The problem of earthquake prediction can be reformulated, in more rigorous terms, as the problem of triggering: understanding why a geological system that accumulates energy over long periods releases it at a specific moment and in a particular area. Plate tectonics provides a solid and widely accepted explanation of energy accumulation, describing the relative motion of plates and the stresses that develop along faults. However, it does not fully explain the timing of the release. This is not a weakness of the theory. Rather, it indicates that seismic phenomena result from a combination of physical conditions, each necessary but none sufficient on its own. From this perspective, earthquakes do not occur randomly: they take place when a set of necessary conditions converges toward a critical threshold.

The research developed within the NCGT/Geoplasma framework fits into this theoretical space, not as an alternative to classical geophysics but as a complement, introducing factors that may help explain the timing of seismic triggering.

Gregori (2018) introduces a central epistemological dimension to the study of natural catastrophes. His work serves as a foundation, and almost as a programmatic reference, for integrating the different physical and observational lines of research on seismic activity and Earth–Space interactions. He highlights that investigating precursors is not only a scientific issue but also a matter of responsibility: when observable signals exist, even partial ones, ignoring them is not merely cautious but may limit scientific inquiry.

A key point is the distinction between deterministic prediction and probabilistic forecasting. The former, understood as the unique and unambiguous determination of time, location, and magnitude, is unrealistic in practice; the latter, although incomplete, is a form of operational knowledge that is both possible and necessary. Gregori notes that mainstream science often avoids using incomplete information, favoring models that are formally rigorous but less applicable. He argues instead that partial information must be used and its uncertainty accepted. This leads to a fundamental principle: a forecasting system does not need to be perfect, but it must be useful. Even imperfect forecasts can improve decisions and help save lives.

In this perspective, the role of the researcher extends beyond description to risk management, reinforcing the idea that earthquakes are the result of complex multi-scale processes in which multiple necessary conditions converge.

The contribution of Straser operates at the most direct and physical level of observation. Its objective is to identify natural electromagnetic signals associated with the stress state of faults prior to rupture.

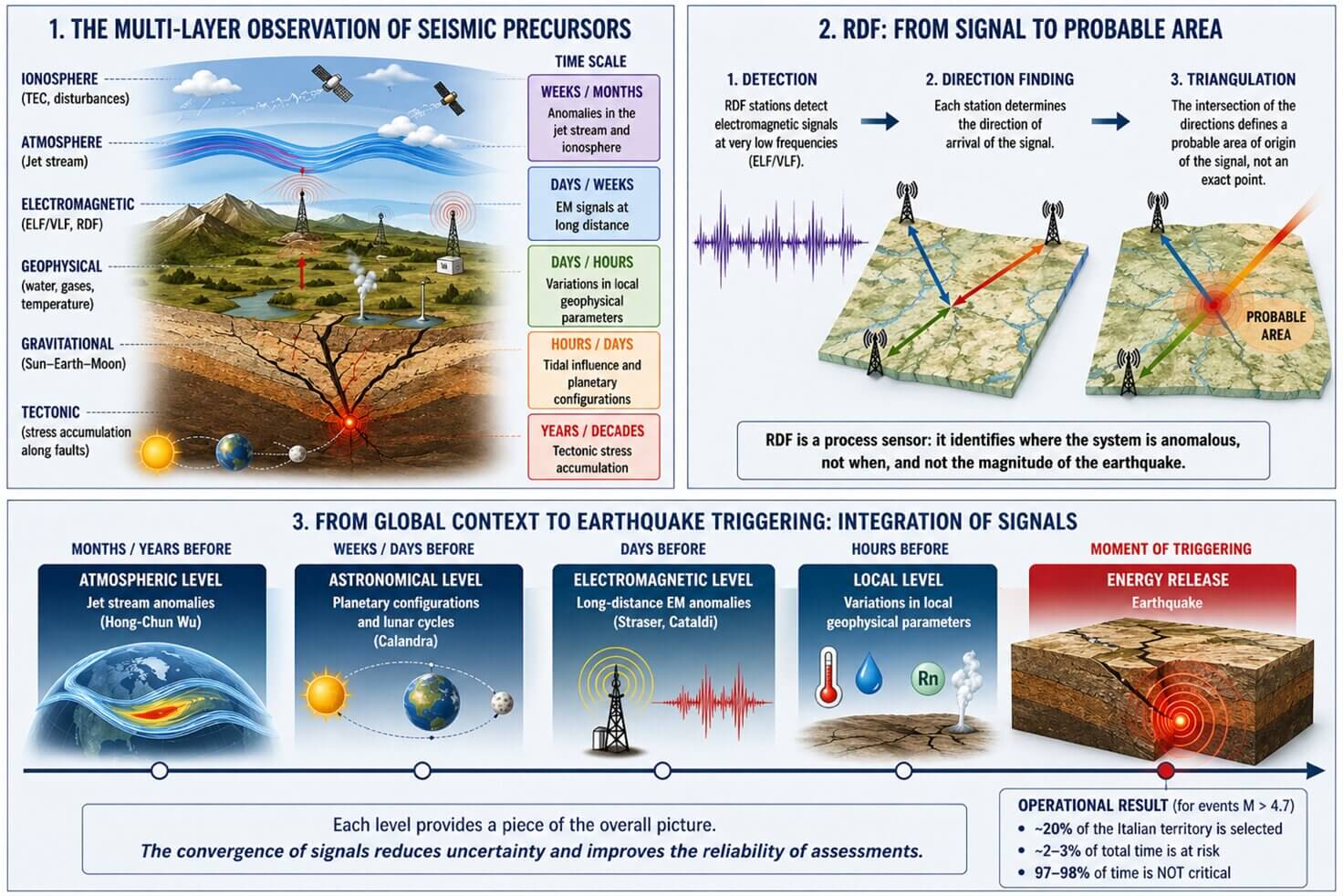

From an operational standpoint, the method is based on a continuous monitoring network composed of ELF/VLF antennas, high-sensitivity radio receivers, and time synchronization systems, often GPS-based. These instruments make it possible to detect very low-frequency signals capable of propagating over long distances both within the Earth’s crust and through the Earth–ionosphere cavity.

A key element of this system is RDF (Radio Direction Finding), which does not merely detect the presence of a signal but determines its direction of origin. This makes it possible, at least in theory, to perform triangulation when multiple stations are available, although in practice the result is always a probabilistic area rather than a precise point, since the signal originates from an extended zone of the fault. RDF is not a sensor of the final event, but a directional anticipatory sensor of the ongoing process.
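
A minimal sketch of two-station triangulation may make this concrete. It assumes a flat local projection (acceptable only for station spacings of a few hundred kilometres), bearings in degrees clockwise from north, and a Gaussian bearing error whose size (`sigma_deg`) is a hypothetical value. All function names are illustrative and do not describe Straser's software. The Monte Carlo scatter shows why the output is an area rather than a point.

```python
import math
import random

EARTH_RADIUS_KM = 6371.0


def to_local_km(lat, lon, lat0, lon0):
    """Equirectangular projection around (lat0, lon0): x = km east, y = km north.
    Adequate only for station spacings of a few hundred kilometres."""
    x = math.radians(lon - lon0) * EARTH_RADIUS_KM * math.cos(math.radians(lat0))
    y = math.radians(lat - lat0) * EARTH_RADIUS_KM
    return x, y


def to_latlon(x, y, lat0, lon0):
    """Inverse of to_local_km for the same reference point."""
    lat = lat0 + math.degrees(y / EARTH_RADIUS_KM)
    lon = lon0 + math.degrees(x / (EARTH_RADIUS_KM * math.cos(math.radians(lat0))))
    return lat, lon


def intersect_bearings(st1, brg1, st2, brg2):
    """Intersect two bearing lines (degrees clockwise from north) from stations
    st1, st2 given as (lat, lon). Returns (lat, lon), or None if the lines are
    parallel or cross behind either station."""
    lat0 = (st1[0] + st2[0]) / 2
    lon0 = (st1[1] + st2[1]) / 2
    x1, y1 = to_local_km(*st1, lat0, lon0)
    x2, y2 = to_local_km(*st2, lat0, lon0)
    d1x, d1y = math.sin(math.radians(brg1)), math.cos(math.radians(brg1))
    d2x, d2y = math.sin(math.radians(brg2)), math.cos(math.radians(brg2))
    # Solve t1*d1 - t2*d2 = P2 - P1 with Cramer's rule.
    det = d2x * d1y - d1x * d2y
    if abs(det) < 1e-9:
        return None  # parallel bearings: no unique intersection
    bx, by = x2 - x1, y2 - y1
    t1 = (d2x * by - bx * d2y) / det
    t2 = (d1x * by - bx * d1y) / det
    if t1 <= 0 or t2 <= 0:
        return None  # lines meet behind a station
    return to_latlon(x1 + t1 * d1x, y1 + t1 * d1y, lat0, lon0)


def bearing_scatter(st1, brg1, st2, brg2, sigma_deg=2.0, n=2000, seed=0):
    """Monte Carlo cloud of intersections under Gaussian bearing error.
    sigma_deg is an assumed, illustrative uncertainty, not a measured one."""
    rng = random.Random(seed)
    points = []
    for _ in range(n):
        p = intersect_bearings(st1, rng.gauss(brg1, sigma_deg),
                               st2, rng.gauss(brg2, sigma_deg))
        if p is not None:
            points.append(p)
    return points
```

Calling `bearing_scatter` with two hypothetical stations and their measured bearings returns a cloud of intersection points. Its extent, not any single coordinate, is the quantity worth reporting, and it widens as the bearing uncertainty grows or as the two bearings approach parallel.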

Observations collected by Straser in various regions, including Italy and Greece, show recurring electromagnetic anomalies in the hours or days preceding earthquakes. These anomalies appear as increases in intensity, frequency variations, or changes in signal patterns, and are often accompanied by visual phenomena such as earthquake lights, interpreted as manifestations of the same physical process. However, the author himself recognizes that such signals are not univocal and may be influenced by various noise sources, which is why the system cannot be considered predictive in a strict sense, but rather a sensor of the ongoing process.

The work of Cataldi represents an operational evolution of the electromagnetic approach. Here too, the instrumental base consists of ELF/VLF antennas and RDF systems, but the focus is more directly on the temporal evolution of the signal and its interpretation within a physical framework.

At Campi Flegrei, electromagnetic signals were observed up to three days before a magnitude 4.6 event, with a progressive increase in intensity and a bearing consistent with the epicentral area, even though the receiving station was more than one hundred kilometers away. This suggests that the signal is not strictly local but propagates over long distances.
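
A progressive increase in intensity can be expressed as a simple detection rule. The sketch below is an assumption, not Cataldi's published procedure: it flags a sample only when the signal exceeds a rolling baseline (mean plus `k` standard deviations of the preceding `window` samples) for `min_run` consecutive samples, so that isolated noise spikes are ignored. The input is assumed to be a regularly sampled intensity series from a single receiver, and all parameter values are illustrative.

```python
import statistics


def flag_sustained_anomalies(series, window=24, k=3.0, min_run=3):
    """Return indices where the signal exceeds mean + k*std of the preceding
    `window` samples for at least `min_run` consecutive samples.
    window, k and min_run are illustrative, not calibrated values."""
    flagged = []
    run = 0
    for i in range(window, len(series)):
        baseline = series[i - window:i]
        threshold = statistics.fmean(baseline) + k * statistics.pstdev(baseline)
        if series[i] > threshold:
            run += 1
            if run >= min_run:  # persistence requirement filters single spikes
                flagged.append(i)
        else:
            run = 0
    return flagged
```

Requiring persistence trades a short detection delay for robustness against impulsive noise, which the text identifies as a major limitation of these signals.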

The specificity of this approach lies in its interpretative integration with tidal factors. Cataldi observes that certain seismic events occur in conjunction with particularly strong Sun–Earth–Moon configurations, suggesting that electromagnetic signals represent the state of the fault, while gravitational forcing may contribute to the timing of the release.

The geographical scope is primarily Italian, with a focus on volcanic and tectonically active areas, and an approach based on case studies rather than large statistical datasets.

The contribution of Hong-Chun Wu introduces a completely different perspective, shifting attention from within the Earth’s crust to the upper atmosphere. The objective is to identify atmospheric precursor signals, particularly within the jet stream, that may indicate a system perturbation prior to an earthquake.

The instruments are global rather than local: satellite observations together with meteorological data and models from agencies such as NOAA and ECMWF, which allow jet stream dynamics to be monitored at altitudes of approximately 9–10 km. The method consists of identifying anomalies such as deviations, stagnation, or discontinuities in the jet stream and comparing them with subsequent seismic events.
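
A hypothetical sketch of two of the anomaly types named above, deviation and stagnation, follows. It assumes a daily series of jet-axis latitude at a fixed longitude and a climatological sample for comparison; the thresholds are illustrative, and the functions do not reproduce Hong-Chun Wu's method.

```python
import statistics


def standardized_anomaly(value, climatology):
    """z-score of one observation against a climatological sample
    (e.g. the same calendar day in previous years): a 'deviation' measure."""
    return (value - statistics.fmean(climatology)) / statistics.stdev(climatology)


def stagnation_runs(axis_lat, tol_deg=1.0, min_days=5):
    """Return (start, end) index pairs of runs in which the daily jet-axis
    latitude stays within tol_deg of the run's first day for >= min_days days.
    tol_deg and min_days are illustrative assumptions."""
    runs = []
    start = 0
    n = len(axis_lat)
    for i in range(1, n + 1):
        # A run ends at the end of the series or when the axis moves too far.
        if i == n or abs(axis_lat[i] - axis_lat[start]) > tol_deg:
            if i - start >= min_days:
                runs.append((start, i - 1))
            start = i
    return runs
```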

The cases analyzed include earthquakes in Chile, Japan, Italy, and Alaska, with a spatial coherence of approximately 100 km. In these cases, the anomalies appear weeks or even months before the event and lie consistently above the future epicentral area.
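
The spatial-coherence criterion can be checked with a great-circle distance. This sketch assumes, as a simplification, that each atmospheric anomaly is summarized by a single centroid, and it uses the approximately 100 km figure from the text as the default radius. The function names are illustrative.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def spatially_coherent(anomaly, epicenter, radius_km=100.0):
    """True if the anomaly centroid (lat, lon) lies within radius_km of the epicentre."""
    return haversine_km(*anomaly, *epicenter) <= radius_km
```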

From this perspective, the earthquake leaves an atmospheric signature before it manifests in the crust.

This type of signal operates on a medium- to long-term timescale and is not useful for immediate prediction, but provides valuable insight into the global state of the system.

The work of Leybourne stands out for its theoretical ambition: to construct a unified model capable of integrating all observed signals. The starting point is that the Earth is not an isolated system, but is electromagnetically coupled with the Sun.

The “stellar transformer” model describes the Sun–Earth system as an electrical circuit in which variations in the solar field induce currents within the Earth, modulating the energy available for seismic processes. In this framework, the Sun acts as the primary coil and the Earth as the secondary coil.

A particularly relevant aspect of the model is the observation that large earthquakes tend to occur during solar minima, when the system transitions from a relatively stable accumulation phase to a release phase. This is complemented by the concept of energy transmigration, according to which energy does not simply accumulate locally but migrates through time and space along deep geological structures, at an estimated velocity of approximately 0.15 km per day.
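
Taking the quoted velocity at face value, and assuming it stays constant along the migration path, the implied timescales follow from simple arithmetic. The function names are illustrative.

```python
TRANSMIGRATION_KM_PER_DAY = 0.15  # estimate quoted in the text
DAYS_PER_YEAR = 365.25


def migration_time_days(distance_km, v=TRANSMIGRATION_KM_PER_DAY):
    """Days needed to migrate distance_km at a constant velocity v (km/day)."""
    return distance_km / v


def distance_per_year_km(v=TRANSMIGRATION_KM_PER_DAY):
    """Kilometres covered in one year at a constant velocity v (km/day)."""
    return v * DAYS_PER_YEAR
```

At 0.15 km per day, migrating 100 km would take about 667 days (roughly 1.8 years), and about 55 km would be covered per year, placing the process on a timescale of years rather than days.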

From an operational standpoint, Leybourne does not rely on a single instrument but integrates data from multiple domains, including electromagnetic (RDF), atmospheric (jet stream), ionospheric (TEC), and geophysical signals, building a multi-level temporal structure ranging from years to hours prior to the event.

The earthquake is not a sudden event, but the final stage of an evolving process distributed in time and space.

The contribution of Calandra represents the transition from theory to operational implementation. The EqForecast model is a probabilistic multi-algorithm system that integrates astronomical configurations, lunar cycles, and statistical seismic memory to estimate when, where, and how strong an earthquake may be.

The tools are primarily computational: calculation of angular distances between planets using astronomical models, statistical analysis of historical seismicity, and integration with real-time micro-seismic data.
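
As an illustration of the angular-distance step only, the following sketch computes the separation between two bodies from their longitudes and latitudes, assuming the positions have already been obtained from an ephemeris. It is not EqForecast's code. The `syzygy_offset_deg` helper, which measures closeness to conjunction or opposition, is a hypothetical addition relevant to lunar cycles.

```python
import math


def angular_separation_deg(lon1, lat1, lon2, lat2):
    """Angular distance in degrees between two bodies, from their ecliptic
    (or equatorial) longitude/latitude in degrees. Uses the numerically
    stable Vincenty form of the great-circle formula."""
    l1, b1, l2, b2 = map(math.radians, (lon1, lat1, lon2, lat2))
    dl = l2 - l1
    num = math.hypot(
        math.cos(b2) * math.sin(dl),
        math.cos(b1) * math.sin(b2) - math.sin(b1) * math.cos(b2) * math.cos(dl),
    )
    den = math.sin(b1) * math.sin(b2) + math.cos(b1) * math.cos(b2) * math.cos(dl)
    return math.degrees(math.atan2(num, den))


def syzygy_offset_deg(separation):
    """Degrees from the nearest alignment: 0 (conjunction) or 180 (opposition).
    Hypothetical helper, not part of any published model."""
    return min(separation, 180.0 - separation)
```

The `atan2` form is preferred over the plain spherical law of cosines because it stays accurate for very small and near-180° separations, which are exactly the configurations of interest for alignments.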

The model is applied operationally to Italy, using a dataset that covers the period from 1985 to the present, and it progressively narrows the selection in both time and space. For events with magnitude greater than 4.7, the system reduces the area at risk to approximately 20% of the national territory and concentrates temporal exposure into about 2–3% of the total time.

In other words, for 97–98% of the time the user is outside any critical window.
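
Translating these percentages into calendar terms is simple arithmetic. The `space_time_fraction` helper rests on an explicit assumption, namely that the 20% spatial and 2–3% temporal selections apply jointly and uniformly; it is not a figure reported for the model.

```python
DAYS_PER_YEAR = 365.25


def exposure_days(time_fraction, days=DAYS_PER_YEAR):
    """Days per year falling inside critical windows for a given time fraction."""
    return time_fraction * days


def space_time_fraction(area_fraction, time_fraction):
    """Share of (area x time) flagged, ASSUMING the spatial and temporal
    selections apply jointly and uniformly; not a figure reported for EqForecast."""
    return area_fraction * time_fraction


for frac in (0.02, 0.03):
    print(f"{frac:.0%} of time: {exposure_days(frac):.1f} days/year flagged, "
          f"{1 - frac:.0%} outside; joint share with 20% area: "
          f"{space_time_fraction(0.20, frac):.1%}")
```

With 365.25 days per year, 2–3% of the time corresponds to about 7–11 days per year inside a critical window.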

This result represents an operational reduction of perceived risk and a significant step toward systems that can be used in real-world conditions.

From an operational perspective, the different approaches involve significantly different cost structures. RDF networks require investment per station ranging from a few thousand euros for experimental setups to tens of thousands for advanced systems, with total costs for a distributed network reaching substantial levels. Additional costs include maintenance, data management, and analytical processing.

Atmospheric models, relying on existing satellite data, involve lower direct costs but require analytical expertise and computational infrastructure. Systems such as EqForecast involve software development, maintenance, and digital infrastructure management costs.

Overall, an integrated system would likely require an initial investment on the order of several hundred thousand euros, plus annual operating costs, a budget compatible with structured research initiatives.

When considered together, these approaches do not appear contradictory but rather describe different levels of the same phenomenon. Electromagnetic signals represent the local and immediate level, atmospheric signals provide medium-term context, astronomical configurations define temporal modulation, and the electrodynamic model offers a unifying theoretical framework.

We are witnessing a process of scientific convergence, in which different models begin to interact and integrate, each contributing to the description of a different aspect of seismic processes.

The upcoming meeting in Parma represents a unique opportunity to transform this theoretical convergence into a concrete operational proposal. One possible direction would be the development of an integrated multi-parameter platform in which the various models are operationally combined.

In such a framework, the EqForecast model could provide the foundational temporal and spatial structure, while RDF networks could add real-time electromagnetic monitoring. Atmospheric data could be used to identify areas of susceptibility on longer timescales, and Leybourne’s model could offer the theoretical framework for interpreting the interaction between these levels.
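
One way such a combination could work is sketched below. The convergence rule, the indicator names, and the alert levels are all hypothetical. They only illustrate the idea that several necessary conditions must converge, with EqForecast acting as the base frame and the other layers raising the level when they agree.

```python
from dataclasses import dataclass


@dataclass
class CellIndicators:
    """Hypothetical per-area, per-time-step indicators from each layer."""
    eqforecast_window: bool    # EqForecast places this cell in a critical time/space window
    rdf_anomaly: bool          # RDF bearings point at the cell with an ongoing anomaly
    atmospheric_anomaly: bool  # longer-term jet-stream susceptibility over the cell


def alert_level(c: CellIndicators) -> int:
    """Illustrative convergence rule (an assumption, not a published scheme):
    0 = background, 1 = watch, 2 = elevated, 3 = all layers converge.
    Inside the EqForecast window, each agreeing layer raises the level by one."""
    if not c.eqforecast_window:
        # Outside the base window, only joint agreement of the other two
        # layers is reported, and only as a watch.
        return 1 if (c.rdf_anomaly and c.atmospheric_anomaly) else 0
    return 1 + int(c.rdf_anomaly) + int(c.atmospheric_anomaly)


# Hypothetical cells, for illustration only.
cells = {
    "cell_A": CellIndicators(True, True, False),
    "cell_B": CellIndicators(False, True, False),
    "cell_C": CellIndicators(True, True, True),
}
for name, ind in cells.items():
    print(name, alert_level(ind))
```

Leybourne's model is not encoded as an indicator here; in a scheme like this it would guide how the indicators are defined and how their interaction is interpreted.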

A pilot project could initially be developed in Italy, where data, operational models, and case studies are already available, with the aim of testing the integration of different signals and evaluating its effectiveness.

The transition from isolated models to integrated systems represents the true frontier of earthquake forecasting research.

If pursued, Parma 2026 could mark not only a moment of scientific exchange, but the beginning of a new operational phase in understanding and managing seismic risk.